Picture this: you're asking your AI assistant to analyse a 100-page document. Behind the scenes, the model is quietly juggling millions of numbers — each one eating up precious memory — trying to hold the entire context of your document in its "working memory" at once. It's a bit like asking someone to memorise a novel before answering your questions. Eventually, it gets expensive. And slow.

This is the reality of running large AI models today. They are extraordinarily capable, but they come with an equally extraordinary price tag — in compute, memory, energy, and speed. Every major AI lab is wrestling with it. And now, Google Research has published something that could mark a genuine turning point.

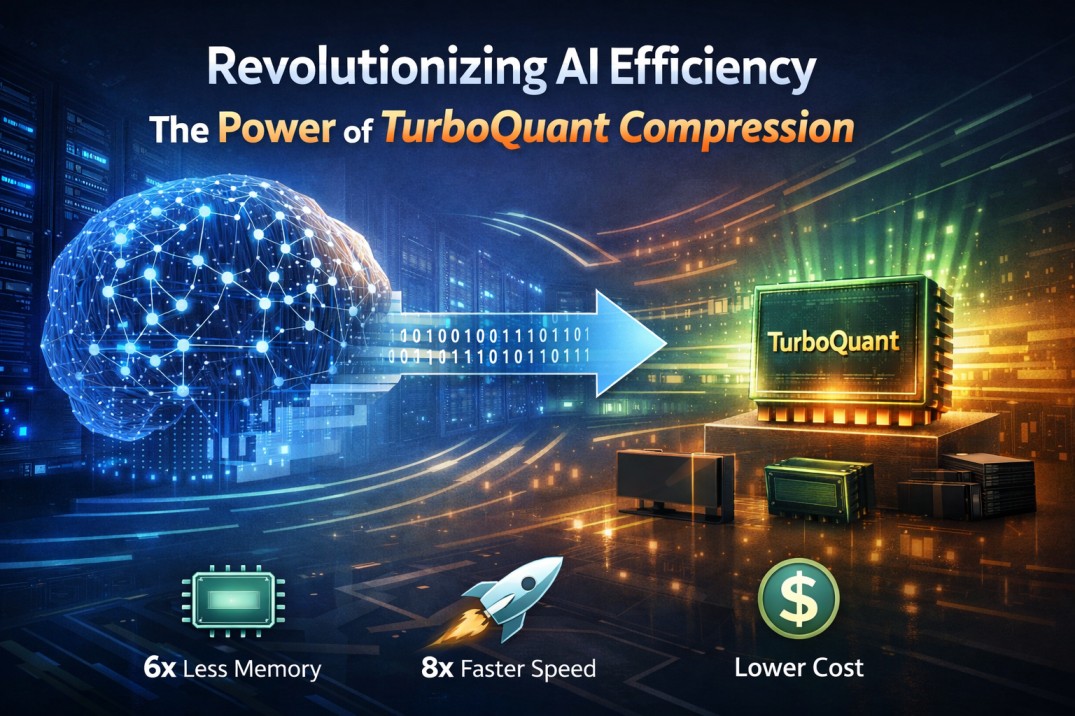

Meet TurboQuant — a new family of compression algorithms that squeezes AI models dramatically smaller, with near-zero loss in quality, and in a way that is mathematically provable. That last part is what makes it genuinely extraordinary.

Visual 1 — The core problem

How the KV cache grows with context length — and where traditional methods waste memory on overhead metadata.

Before we dive in: what even is compression?

Think of a high-resolution photograph. In its raw form, it might be 25 megabytes — perfect detail, every pixel captured. But when you share it on WhatsApp or Instagram, your phone compresses it to maybe 2 megabytes. Most of the time, you can't even tell the difference.

AI models work similarly. They store information as enormous tables of numbers — millions or billions of them — each expressed with extraordinary precision (32 decimal places, in some cases). Compression means representing those same numbers with much less precision, shrinking the file dramatically, while keeping the model's intelligence intact.

The hard part? Do it badly and the model gets dumber. Do it well and nobody notices the difference.

A 70-billion parameter AI model needs roughly 280 GB of memory to run in full precision. That's more RAM than most high-end servers have. Compress it smartly, and you can run it on a single, affordable GPU.

The problem everyone has been trying to solve

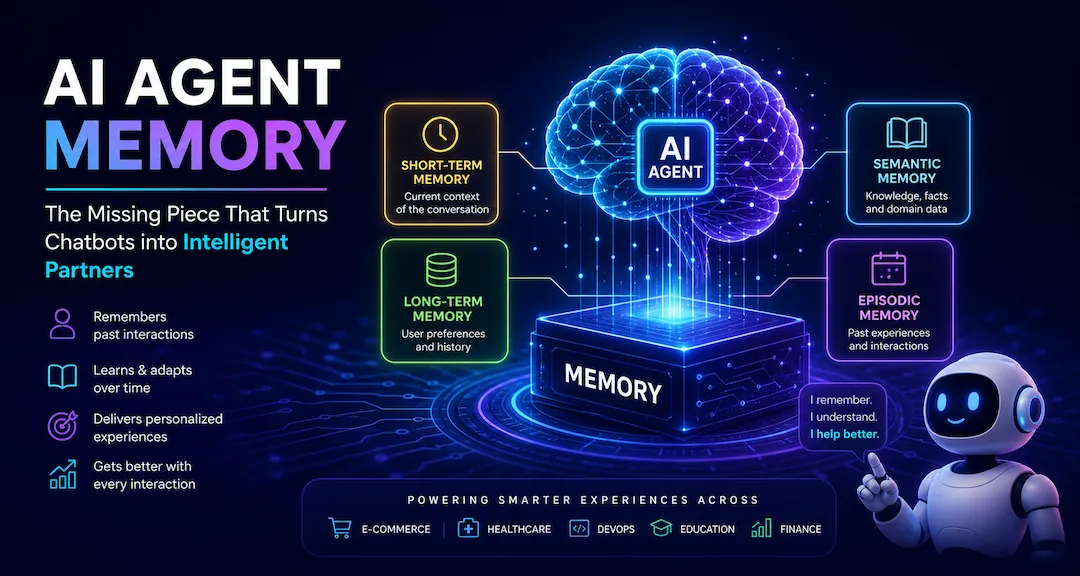

Modern AI models like Gemini, GPT-4, and Llama rely on something called a key-value (KV) cache — essentially a fast-access scratchpad where the model stores context as it processes a long conversation or document. The bigger the context (more text, longer conversations, larger documents), the bigger this cache gets.

Compressing the KV cache sounds straightforward, but there's a nasty catch: traditional compression methods introduce their own hidden overhead. Every time you compress a block of data, you need to store metadata about how you compressed it — a kind of "decoder ring." This overhead can add an extra 1–2 bits per number, partially cancelling out the savings. It's like packing your suitcase only to need a second bag for all the packing supplies.

TurboQuant solves this elegantly. It eliminates the overhead problem entirely.

What TurboQuant actually does

Google's approach is built on three interlocking algorithms: TurboQuant, PolarQuant, and QJL (Quantized Johnson-Lindenstrauss). Together, they form a two-stage compression pipeline.

Instead of describing a data point using standard X/Y/Z coordinates, PolarQuant switches to polar coordinates — think "5 blocks at a 37-degree angle" instead of "3 blocks east, 4 blocks north." Because the angular spread of AI data is highly predictable, this eliminates the need to store those expensive "decoder rings" for each block. No overhead. Just clean, precise compression using most of the available bits.

No compression is perfect — there's always a tiny residual error. QJL uses a mathematical technique called the Johnson-Lindenstrauss Transform to catch and correct this residual using just 1 single bit. That's it. One bit acts as a mathematical error-checker that eliminates bias, giving the model a significantly more accurate attention score at almost no cost.

Visual 2 — The PolarQuant insight

Cartesian coordinates require a "decoder ring" stored per block. Polar coordinates are self-describing — the circular grid boundary is always known, so no metadata is needed.

Visual 3 — The two-stage pipeline

TurboQuant's two-stage pipeline: PolarQuant handles the heavy lifting, QJL mops up the error with a single bit.

"TurboQuant achieves a 6× reduction in KV cache memory while maintaining perfect accuracy on long-context benchmarks."

The numbers that matter

Testing across standard AI benchmarks — including question answering, code generation, document summarisation, and the notoriously tricky "needle in a haystack" task (finding one specific detail buried in thousands of pages of text) — TurboQuant matched the performance of uncompressed models while using a fraction of the memory.

How does it compare to what came before?

TurboQuant didn't emerge in a vacuum. The field of AI compression has been evolving rapidly, and several strong approaches already exist. Here's how they stack up:

| Method | Approach | Zero overhead? | Accuracy | No retraining? |

|---|---|---|---|---|

| TurboQuant (Google) | Polar coords + JL transform | Yes | Near-perfect | Yes |

| GPTQ | Row-wise Hessian-guided quantization | Partial | Very good | Yes |

| AWQ | Activation-aware weight quantization | Partial | Excellent (~95%) | Yes |

| SmoothQuant | Channel-scaling for activations | Partial | Good (8-bit) | Yes |

| QLoRA / QAT methods | Quantization during fine-tuning | No | Excellent | Needs training |

What sets TurboQuant apart is the combination. Most methods make trade-offs: either they need retraining (expensive), or they introduce overhead (inefficient), or their accuracy guarantees are empirical rather than provable. TurboQuant threads this needle — it is provably optimal in an information-theoretic sense.

The distinction matters: empirical results can be lucky. Theoretical guarantees hold even in edge cases, on new models, at new scales. That's the difference between a clever trick and a lasting foundation.

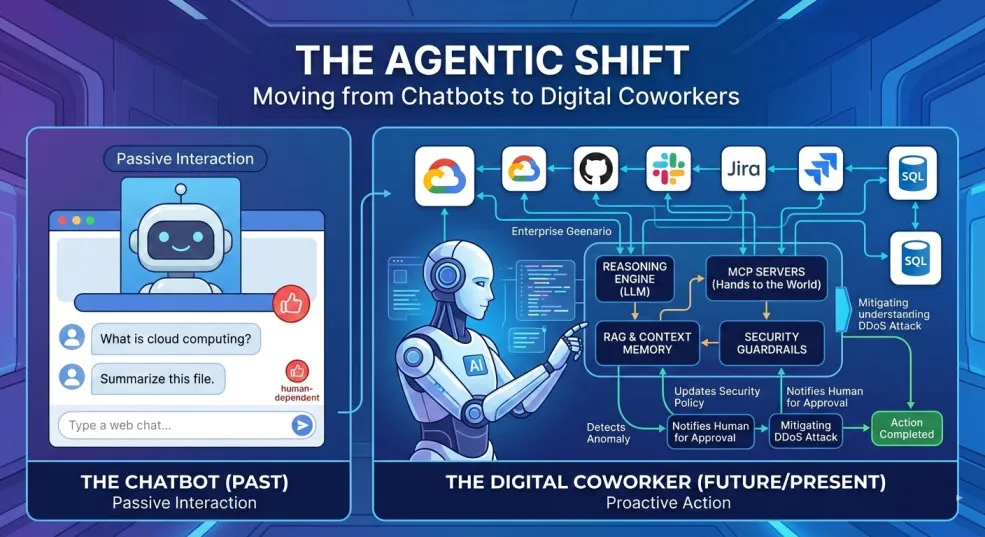

Why this is a genuine game-changer

Let's make this concrete. If you're a developer, this could mean running a powerful model on a single GPU that previously required a $50,000 multi-GPU cluster. If you're a business deploying AI, it means dramatically lower cloud costs and faster responses. And if you're just a user — this is why AI assistants in the future will be faster, cheaper, and more capable of handling very long contexts.

Less memory means fewer and cheaper GPUs. For companies running AI at scale, the infrastructure savings are significant and immediate.

An 8× speedup in attention computation is the difference between an AI that responds in one second and one that responds in eight. For real-time applications — coding assistants, customer service bots, voice agents — this is transformative.

Because TurboQuant shrinks the KV cache, models can now hold much longer conversations, analyse much larger documents, and maintain much richer context — all within the same memory budget.

Compression techniques that reduce memory and compute requirements directly reduce energy consumption — making AI development more sustainable without sacrificing capability.

Visual 4 — The impact map

TurboQuant sits at the infrastructure layer, simultaneously improving the KV cache, vector search, and GPU memory utilisation — unlocking cost, speed, and context gains downstream.

This isn't just engineering — it's mathematics

Perhaps the most underappreciated aspect of TurboQuant is that it isn't a clever hack. It's a theorem. The algorithms operate near theoretical lower bounds — meaning there's a mathematical proof that you cannot do meaningfully better, given the same number of bits. Google didn't just build a faster car; they proved that this is close to as fast as a car of this size can go.

This matters enormously for reliability. When you deploy AI in hospitals, financial systems, or legal applications — you need guarantees, not just impressive benchmark scores. TurboQuant provides that mathematical bedrock.

What comes next

Google has flagged that while the primary application is solving KV cache bottlenecks in models like Gemini, the same techniques apply to vector search — the backbone of how modern search engines find semantically similar content across billions of documents. As search evolves from keyword-matching to meaning-matching, efficient vector quantization becomes critical infrastructure.

Expect to see TurboQuant's influence ripple outward: into how AI models handle longer contexts, into how search engines scale, and into how AI gets deployed on smaller, cheaper, more accessible hardware — eventually including your phone.

The race to make AI cheaper and faster has many players. But Google's approach — grounding the solution in mathematics rather than heuristics — gives TurboQuant a durability that empirical methods often lack. It's not just a step forward. It's a new floor.