The Ghost in the Machine: Hacking the Future of AI

Artificial Intelligence is no longer just a tool; it's the new frontier for cyber warfare. Explore the evolving landscape of AI-driven attacks and the race to build a secure intelligent future.

The Double-Edged Sword

This section introduces the fundamental duality of AI in cybersecurity. AI is simultaneously being developed as a sophisticated weapon for launching unprecedented attacks and as a powerful shield to defend against them. Understanding this conflict is key to grasping the modern threat landscape.

Offensive AI: The Weapon

Attackers are leveraging AI to create hyper-realistic, automated, and evasive threats that bypass traditional security measures with alarming efficiency.

AI-Enhanced Social Engineering

Generative AI creates convincing phishing emails and deepfake voice clones of executives, making deception scalable and terrifyingly personal.

Automated Exploit Generation

AI tools scan for system vulnerabilities and write functional exploit code automatically, dramatically lowering the skill required to launch sophisticated attacks.

AI-Driven Polymorphic Malware

AI-driven polymorphic malware constantly rewrites its own code, creating a moving target that signature-based antivirus systems struggle to detect.
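To see why exact-match signatures are brittle, here is a minimal, harmless sketch. It assumes a scanner that flags files purely by SHA-256 hash; the `signature` and `is_flagged` helpers and the byte strings are hypothetical stand-ins, not real malware or a real antivirus API.

```python
import hashlib

def signature(payload: bytes) -> str:
    """Hash-based 'signature' of a file's contents (illustrative only)."""
    return hashlib.sha256(payload).hexdigest()

# Hypothetical blocklist containing one known sample.
known_bad = {signature(b"example-payload-v1")}

def is_flagged(payload: bytes) -> bool:
    """Exact-match lookup against the blocklist."""
    return signature(payload) in known_bad

original = b"example-payload-v1"
variant = b"example-payload-v1 "  # a single extra byte

print(is_flagged(original))  # matches the stored signature
print(is_flagged(variant))   # hash differs, so the lookup misses
```

Any change to the bytes produces a different hash, which is why defenders pair signatures with behavioral and anomaly-based detection.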

Hacking AI: The Target

The very AI systems designed to help us are now prime targets. By manipulating the AI model itself, attackers can turn our own technology against us.

Adversarial Machine Learning

Adversarial machine learning is the core category of attacks on AI. Attackers make small, carefully chosen changes to the data fed to a model so that it makes catastrophic errors, like tricking a self-driving car into misreading a stop sign.
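A toy illustration of the idea, in the style of the fast gradient sign method (FGSM), applied to a hand-built linear classifier. The weights, bias, input, and `epsilon` are made-up values chosen for illustration; real attacks target far larger models, but the mechanism is the same: nudge every feature slightly in the direction that hurts the model most.

```python
def score(w, b, x):
    """Linear score: w . x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def predict(w, b, x):
    """Class 1 if the score is positive, else class 0."""
    return 1 if score(w, b, x) > 0 else 0

def sign(v):
    return (v > 0) - (v < 0)

def fgsm_perturb(w, x, epsilon, true_label):
    """Shift each feature by at most epsilon in the direction that increases the loss.

    For a linear model with logistic loss, the gradient with respect to x
    points along -w when the true label is 1 and along +w when it is 0.
    """
    direction = -1 if true_label == 1 else 1
    return [xi + direction * epsilon * sign(wi) for xi, wi in zip(x, w)]

# Hypothetical model and input.
w = [0.9, -0.4, 0.3]
b = -0.1
x = [0.5, 0.2, 0.1]

x_adv = fgsm_perturb(w, x, epsilon=0.25, true_label=1)

print(predict(w, b, x))      # clean input is classified as class 1
print(predict(w, b, x_adv))  # perturbed input flips to class 0
```

No feature moves by more than `epsilon`, yet the prediction flips, because the small shifts all push the score in the same direction.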

Model Integrity at Risk

Instead of attacking the infrastructure an AI runs on, these attacks corrupt the model's logic, turning a trusted asset into an unreliable or malicious agent from within.

Exploiting the Black Box

Many AI models are complex "black boxes." Attackers exploit this lack of transparency to hide backdoors or biases that are incredibly difficult to detect.

Anatomy of an AI Hack

This section breaks down the primary methods hackers use to compromise AI systems, from subtly tricking a model with manipulated data to outright stealing an organization's intellectual property. Walking through the mechanics of each attack vector clarifies how these theoretical risks translate into practical security breaches.

AI Attack Vector Risk Assessment

[Chart: perceived risk and frequency of major AI attack vectors, based on current cybersecurity trends.] It helps contextualize which threats are most prominent in the wild, guiding focus for defensive strategies.

Building the Shield: AI Defenses

As attackers weaponize AI, a new generation of defensive strategies is emerging. This section explores the critical countermeasures and best practices organizations are adopting to secure their AI systems. These defenses range from fortifying the data AI learns from to building transparency into the models themselves, creating a multi-layered security posture for the age of AI.

Robust Data Validation

Implementing strict data cleansing and verification protocols to prevent corrupted or malicious data from being used to train a model, mitigating poisoning attacks.
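As a rough sketch of what such validation can look like, the snippet below screens training records before they reach a model. The record layout, label set, feature range, and function names are all assumptions made for this example; a production pipeline would add provenance checks and statistical outlier detection on top.

```python
ALLOWED_LABELS = {"benign", "malicious"}   # hypothetical label set
FEATURE_MIN, FEATURE_MAX = 0.0, 1.0        # assumes features are pre-scaled
NUM_FEATURES = 3

def validate_record(record):
    """Return a list of problems found in one record (empty if it looks clean)."""
    problems = []
    features = record.get("features")
    label = record.get("label")
    if not isinstance(features, list) or len(features) != NUM_FEATURES:
        problems.append("wrong feature count")
    elif not all(
        isinstance(v, (int, float)) and not isinstance(v, bool)
        and FEATURE_MIN <= v <= FEATURE_MAX
        for v in features
    ):
        problems.append("feature out of range")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        problems.append("unknown label")
    return problems

def filter_dataset(records):
    """Split records into clean ones and (record, problems) rejections."""
    clean, rejected = [], []
    labels_seen = {}
    for record in records:
        problems = validate_record(record)
        if not problems:
            # Identical features with a different label can signal label flipping.
            key = tuple(record["features"])
            previous = labels_seen.setdefault(key, record["label"])
            if previous != record["label"]:
                problems.append("conflicting label for duplicate features")
        if problems:
            rejected.append((record, problems))
        else:
            clean.append(record)
    return clean, rejected

records = [
    {"features": [0.1, 0.5, 0.9], "label": "benign"},
    {"features": [0.1, 0.5, 7.0], "label": "benign"},
    {"features": [0.2, 0.2], "label": "malicious"},
    {"features": [0.1, 0.5, 0.9], "label": "malicious"},
    {"features": [0.3, 0.3, 0.3], "label": "unknown"},
]
clean, rejected = filter_dataset(records)
for record, problems in rejected:
    print(problems, record)
```

Keeping the rejection reasons alongside each record makes it easier to audit whether a spike in rejections reflects a broken data feed or an attempted poisoning.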

Adversarial Training

Proactively training AI models with known adversarial examples to make them more resilient and less susceptible to being fooled by manipulated inputs.
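One simple way to picture adversarial training is a logistic regression that, for every clean example, also takes a gradient step on an FGSM-style perturbed copy of it. The sketch below uses only the standard library; the dataset, `epsilon`, learning rate, and function names are invented for illustration and are not a recommended configuration.

```python
import math

def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def predict(w, b, x):
    return 1 if dot(w, x) + b > 0 else 0

def fgsm(w, b, x, y, epsilon):
    """Perturb x by epsilon per feature along the sign of the loss gradient.

    For logistic loss, the gradient with respect to x is (p - y) * w.
    """
    p = sigmoid(dot(w, x) + b)
    return [xi + epsilon * sign((p - y) * wi) for xi, wi in zip(x, w)]

def sgd_step(w, b, x, y, lr):
    """One stochastic gradient step on the logistic loss."""
    err = sigmoid(dot(w, x) + b) - y
    return [wi - lr * err * xi for wi, xi in zip(w, x)], b - lr * err

def adversarial_train(data, epsilon, epochs=200, lr=0.1):
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            w, b = sgd_step(w, b, x, y, lr)       # learn from the clean example
            x_adv = fgsm(w, b, x, y, epsilon)     # craft a worst-case neighbour
            w, b = sgd_step(w, b, x_adv, y, lr)   # learn from that as well
    return w, b

# Tiny made-up dataset: (features, label).
data = [
    ([2.0, 0.0], 1),
    ([1.5, 0.5], 1),
    ([-2.0, 0.0], 0),
    ([-1.5, -0.5], 0),
]
w, b = adversarial_train(data, epsilon=0.5)
print([predict(w, b, x) for x, _ in data])
```

The adversarial copies are regenerated against the current weights at every step, so the model keeps seeing the perturbations that are most damaging to it at that moment.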

Secure AI Development

Integrating security checks at every stage of the AI lifecycle, from data sourcing and model training to deployment and monitoring (Sec-AI-DevOps).
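One concrete lifecycle check is verifying a model artifact against a pinned hash before deployment. The following is a minimal sketch using only the Python standard library; the file name, its contents, and the helper names are placeholders, and in practice the pinned digest would come from a trusted release record rather than being computed on the spot.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path

def sha256_of_file(path, chunk_size=65536):
    """Stream a file through SHA-256 so large artifacts need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_artifact(path, expected_sha256):
    """True only if the file's hash matches the pinned value."""
    return hmac.compare_digest(sha256_of_file(path), expected_sha256.lower())

# Demo with a throwaway file standing in for a model artifact.
with tempfile.TemporaryDirectory() as tmp:
    model_path = Path(tmp) / "model.bin"
    model_path.write_bytes(b"pretend these are model weights")
    pinned = sha256_of_file(model_path)  # stand-in for a digest recorded at release

    ok_before = verify_artifact(model_path, pinned)
    model_path.write_bytes(b"pretend these are model weights, tampered")
    ok_after = verify_artifact(model_path, pinned)

print(ok_before, ok_after)
```

A deployment step can refuse to load any artifact for which this check fails, which turns silent model tampering into a visible pipeline error.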

Explainable AI (XAI)

Using techniques that make an AI's decision-making process transparent. If you can understand *why* a model made a decision, you can more easily spot manipulation.
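For a linear model, the simplest form of explanation is to break a score into per-feature contributions (weight times value). The sketch below does that for a hypothetical login-anomaly scorer; the feature names, weights, and bias are invented for illustration. Complex models need dedicated attribution methods, but the goal is the same.

```python
def explain_linear(weights, bias, features, names):
    """Return the score and each feature's contribution, largest magnitude first."""
    contributions = {n: w * x for n, w, x in zip(names, weights, features)}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

# Hypothetical anomaly scorer for a login event.
names = ["failed_logins", "bytes_out", "off_hours"]
weights = [0.8, 0.1, 0.5]
bias = -1.0
features = [5, 2, 1]

score, ranked = explain_linear(weights, bias, features, names)
print(round(score, 2))
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

If an alert turns out to be driven mostly by a feature an attacker can cheaply control, that is a cue to investigate whether the input was manipulated.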

Future Frontiers in AI Security

The battle for AI security is just beginning. This final section looks at the horizon, exploring the emerging regulatory landscape, the collaborative efforts to find vulnerabilities, and the profound challenges posed by the next wave of autonomous AI agents. These trends will define the rules of engagement for cybersecurity in the coming decade.

Regulatory & Ethical Dimensions

Governments worldwide are racing to catch up. Landmark measures such as the EU AI Act and US executive orders are establishing early legal frameworks for AI security, including risk assessments and security requirements for high-impact systems.

Crowdsourcing Security

The security community is adapting. Bug bounty programs, like those from Google and other tech giants, are expanding to include AI systems. They incentivize ethical hackers to find and report vulnerabilities before malicious actors can exploit them.

The Rise of Autonomous Agents

The ultimate challenge looms: AI agents that can act independently, access systems, and coordinate complex attacks without human intervention. Securing a world with autonomous agents requires a fundamental rethinking of cybersecurity principles.

Recent Posts

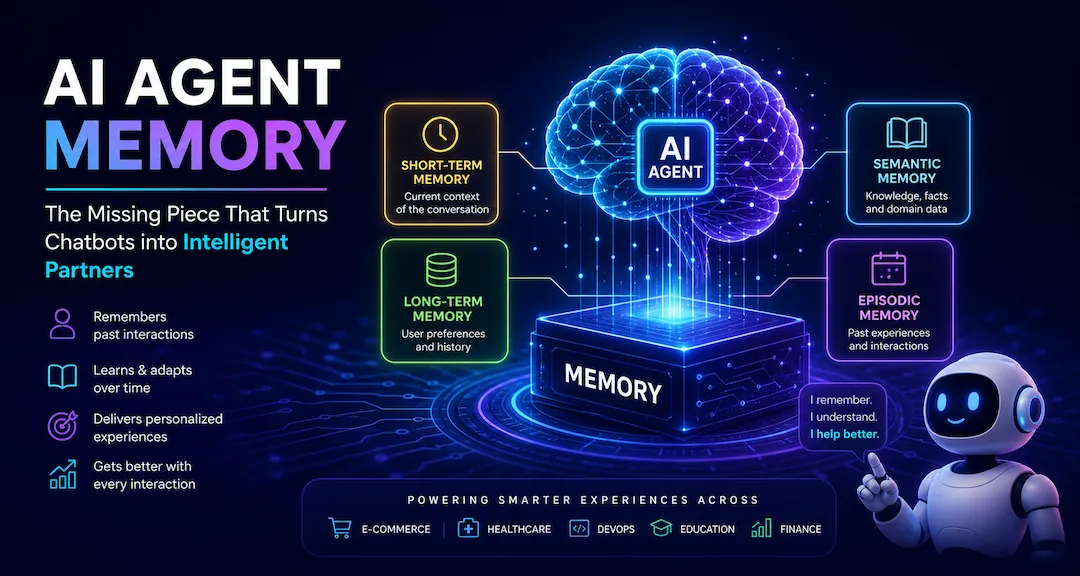

The Memory That Makes AI Agents Truly Intelligent: A Deep Dive into AI Agent Memory

A practical deep dive into AI Agent Memory: the memory stack, long-term memory types, runtime flow, production architecture, security risks, and best practices for building agents that remember.

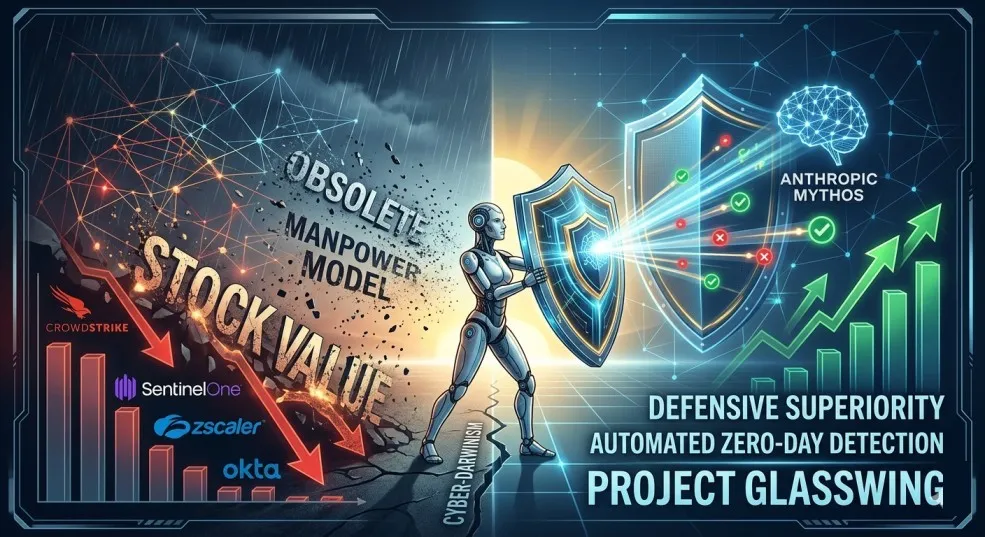

The AI That Could Hack the World: How Anthropic's Claude Mythos Is Rewriting Cybersecurity

Anthropic's Claude Mythos Preview has unearthed 27-year-old vulnerabilities and can chain Linux kernel exploits. This unreleased AI is forcing a massive cybersecurity reckoning and stock market whiplash.

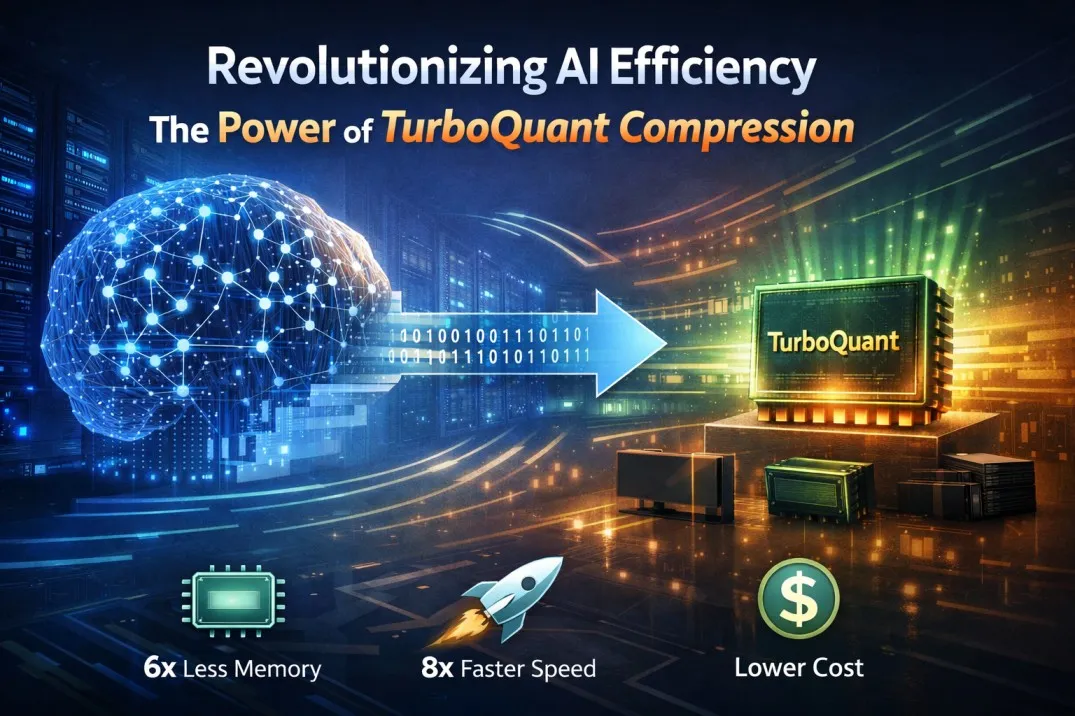

TurboQuant: How Google Just Rewrote the Rules of AI Efficiency

A smarter way to compress AI's most precious resource — without losing a drop of intelligence. Here's why it matters for everyone from engineers to everyday users.

Architecting the Agentic Enterprise

These 10 reusable agentic AI blueprints show how autonomous systems can plan, act, reflect, retrieve, collaborate, and stay aligned with human judgment for real enterprise advantage.

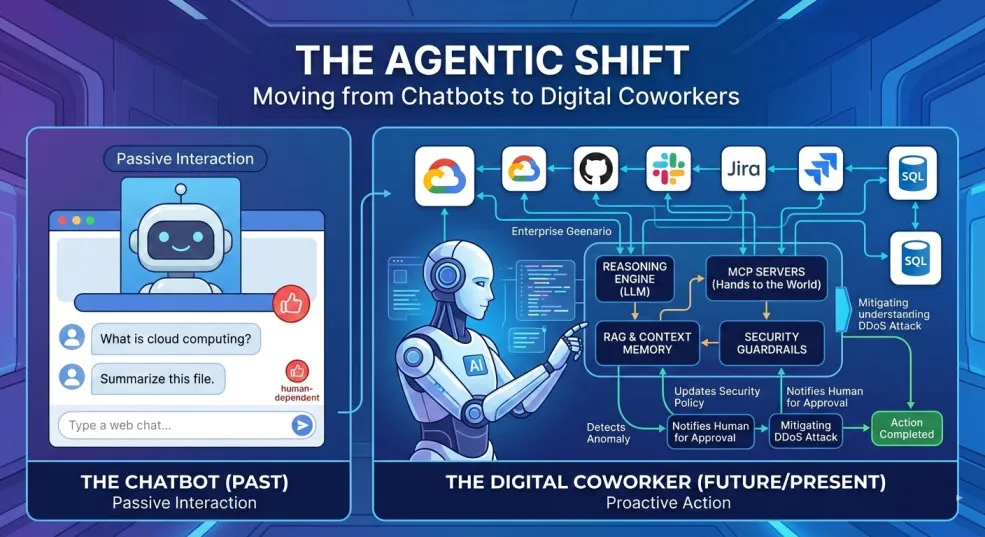

The Agentic Shift: Moving from Chatbots to Digital Coworkers

By 2026, enterprises are moving from AI chatbots that answer questions to digital coworkers that own outcomes across end-to-end workflows.

The Future of Agentic AI in Enterprise Applications

Why the next 3–6 months will define enterprise AI leadership — and how product and technology leaders can prepare for agentic systems that plan, decide, orchestrate, and execute.

Integration Modernization: An Enterprise Strategy for the Connected Enterprise

A Strategic framework for CIOs, CTOs, and enterprise architects to modernize integration, reduce risk, and unlock connected enterprise velocity.